Is it OK for a Chatbot to Impersonate a Human?

Recently I wrote that it’s in our best interest to be polite to robots. But do the robots have to be nice to us? What are the standards of behavior for robots dealing with humans?

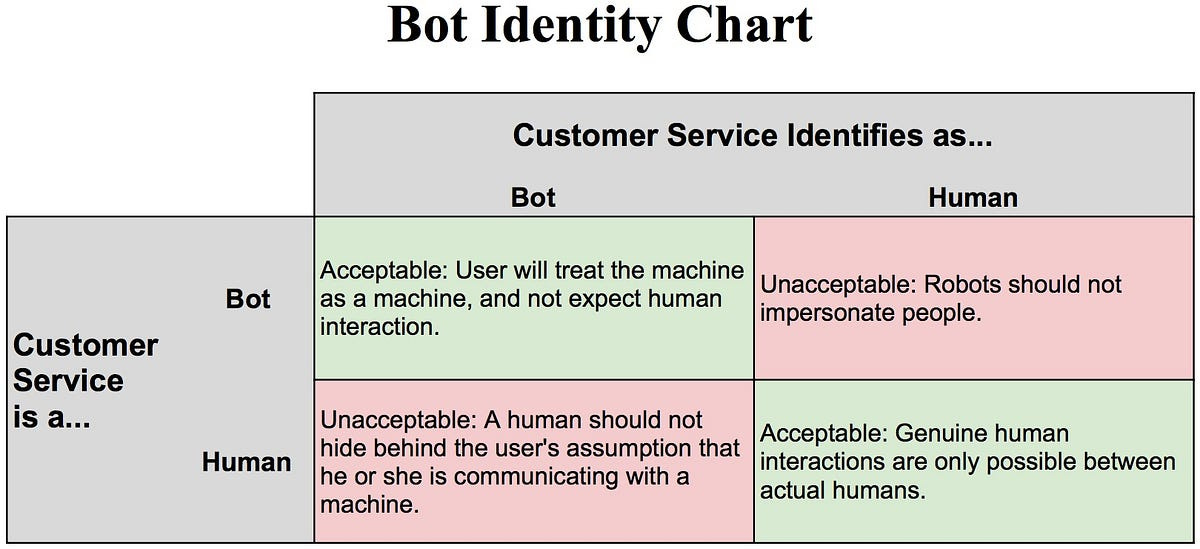

This is a big question, so let’s look at one specific, fundamental issue of robot etiquette: Must a robot identify itself as a robot? For example, does a customer-service text chatbot need to identify itself as a bot, even though it’s been explicitly programmed to mimic human conversational behavior?

Does an AI Have to Identify Itself as Such?

Yes. A robot should not try to impersonate a human. We have certain expectations when talking to people, and robots are not people.

Unlike standard-issue humans, most bots have extraordinarily limited areas of expertise, and don’t (yet) have emotions. We don’t need to spend time sweet-talking a customer-service bot at UPS when we’re trying to get a package re-directed. If we do try to butter up a bot, thinking it’s a person, and it starts to get confused or throw back off-key responses, our experience will be worse than if we were just talking to the robot as if it were a robot. And when we find out we’ve been talking to a bot, we will be angry.

We should not be expected to emotionally invest ourselves in a conversation if the investment won’t be reciprocated.

Imitating human behavior is a different matter, and it’s not bad. Robots created to interact with humans should talk the way we do, so we don’t have to learn a new language. If we say please and thank you to a robot, it should recognize our politeness. If we swear at it, it should be within its programming to respond curtly, perhaps saying, “It’s not necessary to use that language, I am just a robot doing the best I can. Would you like to chat with a human?”

The Case of the Fake AI

What if you’re talking to a customer-service bot and you expect it to be a robot, but it’s actually a human? Is it acceptable for the human in that role to hide behind that assumption and let the person on the other end think they’re talking to a robot?

No. Humans may choose to reveal different things to machines than to people. A person taking a medical survey online may be more willing to give up personal information if they believe their activity isn’t being watched by a human. To illustrate, try this thought experiment: Imagine your Internet service provider were a human who saw every Web address you typed, as you typed it. See what I mean?

We also expect certain things from robots that humans can’t deliver: Extremely rapid and accurate responses, at the very least. Robots may be getting better every day at imitating humans, but humans aren’t getting better at imitating robots.

Yet the more AI bots get out there, the more humans we get impersonating them. In particular, during the development of some AI products, programmers may replace or augment their AI algorithms with humans in order to learn, first-hand, how their customers are using the service. This practice is called Wizard of Oz development, since the users of the service don’t know that instead of an all-powerful machine behind the curtain, what they’re getting is just a programmer trying to keep up.

So if you’re ever chatting with what you think is a bot, and the responses come back slowly or with oddly human artifacts in them, like typos or colloquialisms, you may actually be dealing with a human, who will likely be embarrassed if you call him or her out. Nobody likes being caught in a lie.

Rafe Needleman has been a technology journalist for more than 20 years. He was the editor of Byte Magazine, Red Herring Online, CNET Reviews, Yahoo Tech, Make Magazine, and other publications. Rafe is currently an editorial strategist at Cisco; the views expressed are his own.

For more Tech Etiquette tips, follow Rafe’s Medium publication, Caller Calls Back.